Researchers from IBM and the University of Melbourne have developed a proof-of-concept seizure forecasting system that predicted an average of 69 percent of seizures in a dataset covering 10 epilepsy patients.

copyright by www.zdnet.com

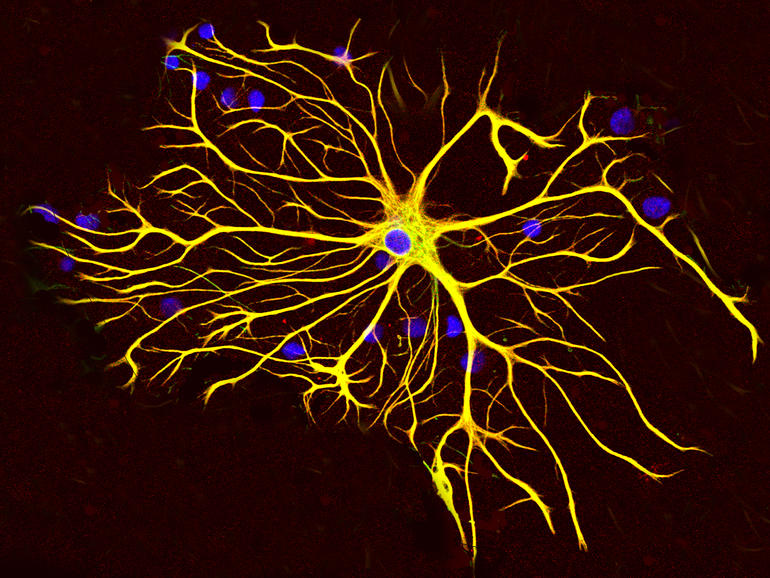

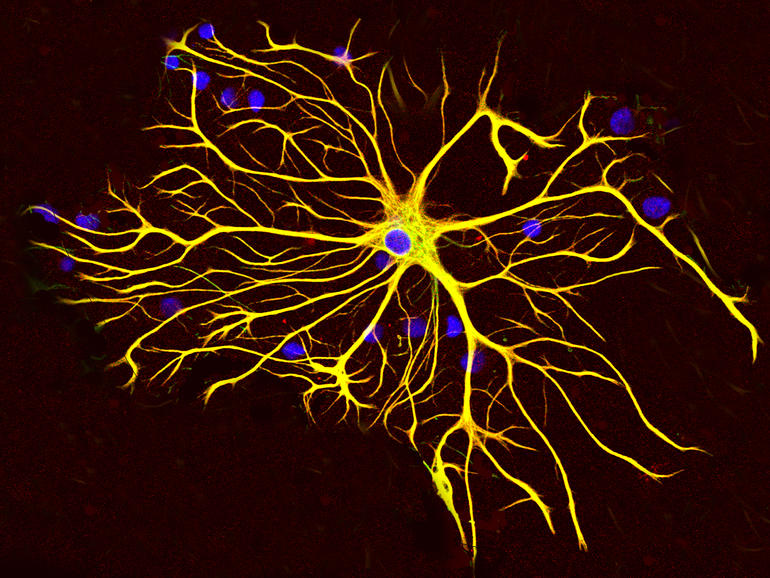

The system, which the scientists claim is “fully automated, patient-specific, and tunable to an individual’s needs”, uses a combination of deep-learning algorithms and a low-power “brain-inspired” computing chip to predict when seizures might occur, even if patients have no previous prediction indicators.

For the proof of concept, the researchers drew on 60 days of data per patient from an earlier study conducted by the University of Melbourne and St Vincent’s Hospital. The system achieved a 69 percent average prediction rate even though the algorithm had no knowledge of future data, which allowed the researchers to simulate how it could operate in real-world scenarios. The algorithms were also retrained in response to an individual’s long-term brain signal changes.
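The idea of scoring a predictor that never sees future data, and is retrained as new recordings arrive, can be illustrated with a small walk-forward loop. This is a minimal sketch under stated assumptions, not the researchers' method: the single numeric "feature" per day, the toy threshold model, and the names `walk_forward_sensitivity`, `train_threshold` and `predict` are all hypothetical stand-ins for the deep-learning pipeline the article describes.

```python
from statistics import mean


def train_threshold(history):
    """Toy 'model' (assumption): midpoint between the mean feature value on
    seizure days and on seizure-free days seen so far."""
    pos = [x for x, had_seizure in history if had_seizure]
    neg = [x for x, had_seizure in history if not had_seizure]
    if not pos or not neg:
        return float("inf")  # not enough history yet: never raise an alert
    return (mean(pos) + mean(neg)) / 2


def predict(threshold, feature):
    """Raise an alert when the feature exceeds the learned threshold."""
    return feature > threshold


def walk_forward_sensitivity(days, min_history=1):
    """days: chronological list of (feature, had_seizure) pairs.

    For each day t, the model is retrained on days[:t] only, so it has no
    knowledge of day t or anything later. Returns the fraction of seizure
    days that were correctly flagged.
    """
    hits = 0
    seizures = 0
    for t in range(min_history, len(days)):
        model = train_threshold(days[:t])  # past data only
        feature, had_seizure = days[t]
        if had_seizure:
            seizures += 1
            if predict(model, feature):
                hits += 1
    return hits / seizures if seizures else float("nan")


# Made-up data for illustration only; not taken from the study.
toy_days = [(0.1, False), (0.9, True), (0.2, False),
            (0.8, True), (0.3, False), (0.7, True)]
sensitivity = walk_forward_sensitivity(toy_days)
print(f"fraction of seizures predicted: {sensitivity:.2f}")
```

In this toy run the first seizure is missed because the model has not yet seen a seizure day, which shows why a figure obtained this way is a stricter test than one computed with access to the full recording.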

“To date, much of the research has been limited to training algorithms based on general patterns for seizures … for example, doctors manually selected signs and patterns which could preempt seizures, which were then used to train prediction algorithms. However, these researchers were limited in their ability to reliably predict seizures across all patients in a long-term fashion, given brain activity patterns are not only specific to an individual but also change over time,” states the report, titled “Epileptic Seizure Prediction using Big Data and Deep Learning: Toward a Mobile System”.

“New deep-learning techniques have helped us improve from previous results, allowing the system to automatically identify seizure patterns for individual patients and adapt to changing brain signals over time, without additional human involvement.”

The seizure prediction system, which was designed so that epilepsy patients can adjust when and how they are alerted to an upcoming seizure, was deployed on an ultra-low-power neuromorphic computing chip to demonstrate potential applicability in the real world as a smart wearable. […]
