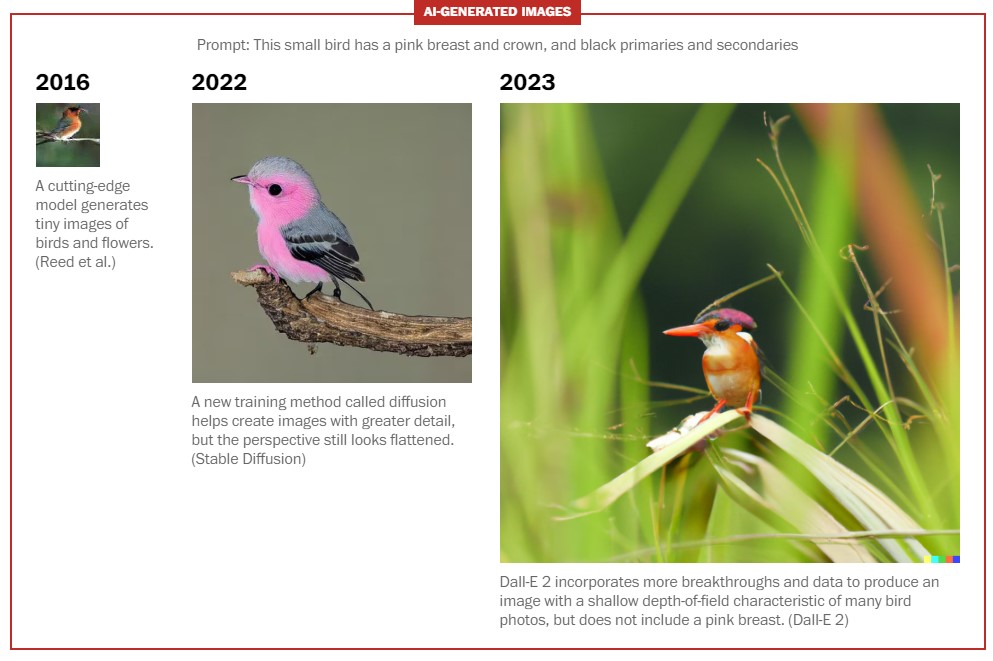

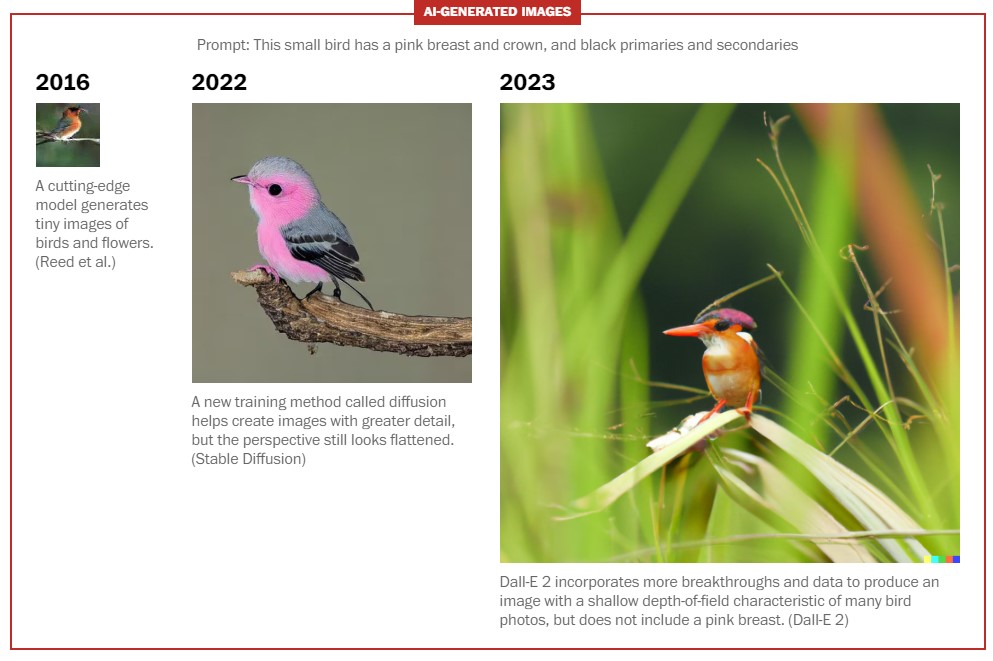

The Washington Post asked three AI systems to generate content using the same prompt. The results illustrate how quickly the technology has advanced.

Copyright: washingtonpost.com – “See why AI like ChatGPT has gotten so good, so fast”

Artificial intelligence has become shockingly capable in the past year. The latest chatbots (like ChatGPT) can conduct fluid conversations, craft poems and even write lines of computer code, while the latest image-makers can create fake “photos” that are virtually indistinguishable from the real thing.

It wasn’t always this way. As recently as two years ago, AI created robotic text riddled with errors. Images were tiny, pixelated and lacked artistic appeal. The mere suggestion that AI might one day rival human capability and talent drew ridicule from academics.

A confluence of innovations has spurred growth. Breakthroughs in mathematical modeling, improvements in hardware and computing power, and the emergence of massive high-quality data sets have supercharged generative AI tools.

While artificial intelligence is likely to improve even further, experts say the past two years have been uniquely fertile. Here’s how it all happened so fast.

A training transformation

Much of this recent growth stems from a new way of building and training AI, called the transformer model. This method allows the technology to process large blocks of language quickly and to test the fluency of the outcome.

It originated in a 2017 Google study that quickly became one of the field’s most influential pieces of research.

To understand how the model works, consider a simple sentence: “The cat went to the litter box.”

Previously, artificial intelligence models would analyze the sentence sequentially, processing the word “the” before moving on to “cat” and so on. This took time, and the software would often forget its earlier learning as it read new sentences, said Mark Riedl, a professor of computing at Georgia Tech.[…]

Read more: www.washingtonpost.com
