No, the patterns computers create are not an inherent property of data; they are an emergent property of the structure of the program itself.

Copyright: zdnet.com – “AI: The pattern is not in the data, it’s in the machine”

It’s a commonplace of artificial intelligence to say that machine learning, which depends on vast amounts of data, functions by finding patterns in data.

The phrase “finding patterns in data” has, in fact, been a staple of fields such as data mining and knowledge discovery for years now, and it has been assumed that machine learning, and its deep learning variant especially, simply continues the tradition of finding such patterns.

AI programs do, indeed, result in patterns. But just as “The fault, dear Brutus, is not in our stars, but in ourselves,” the fact of those patterns is not something in the data; it is what the AI program makes of the data.

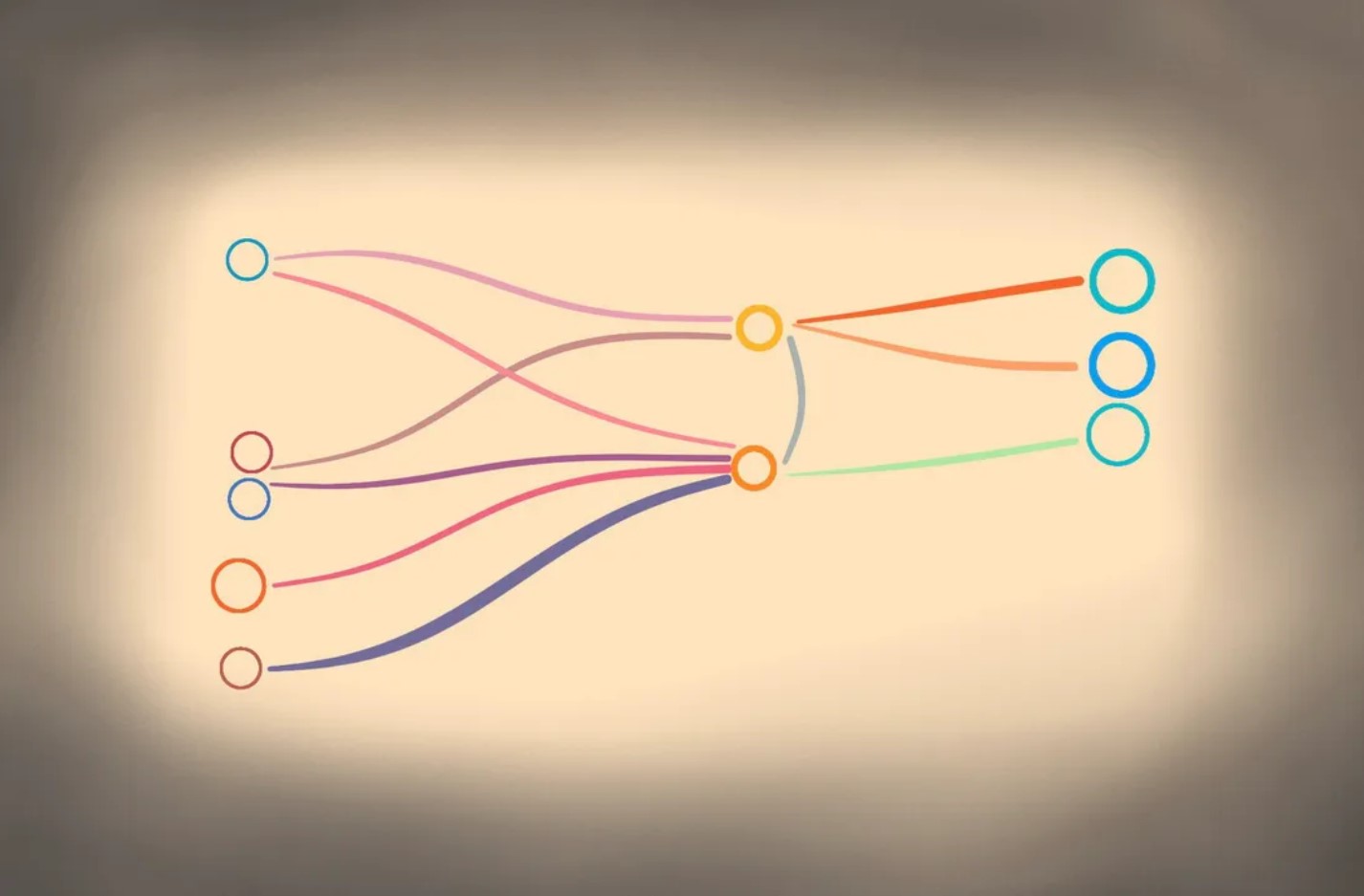

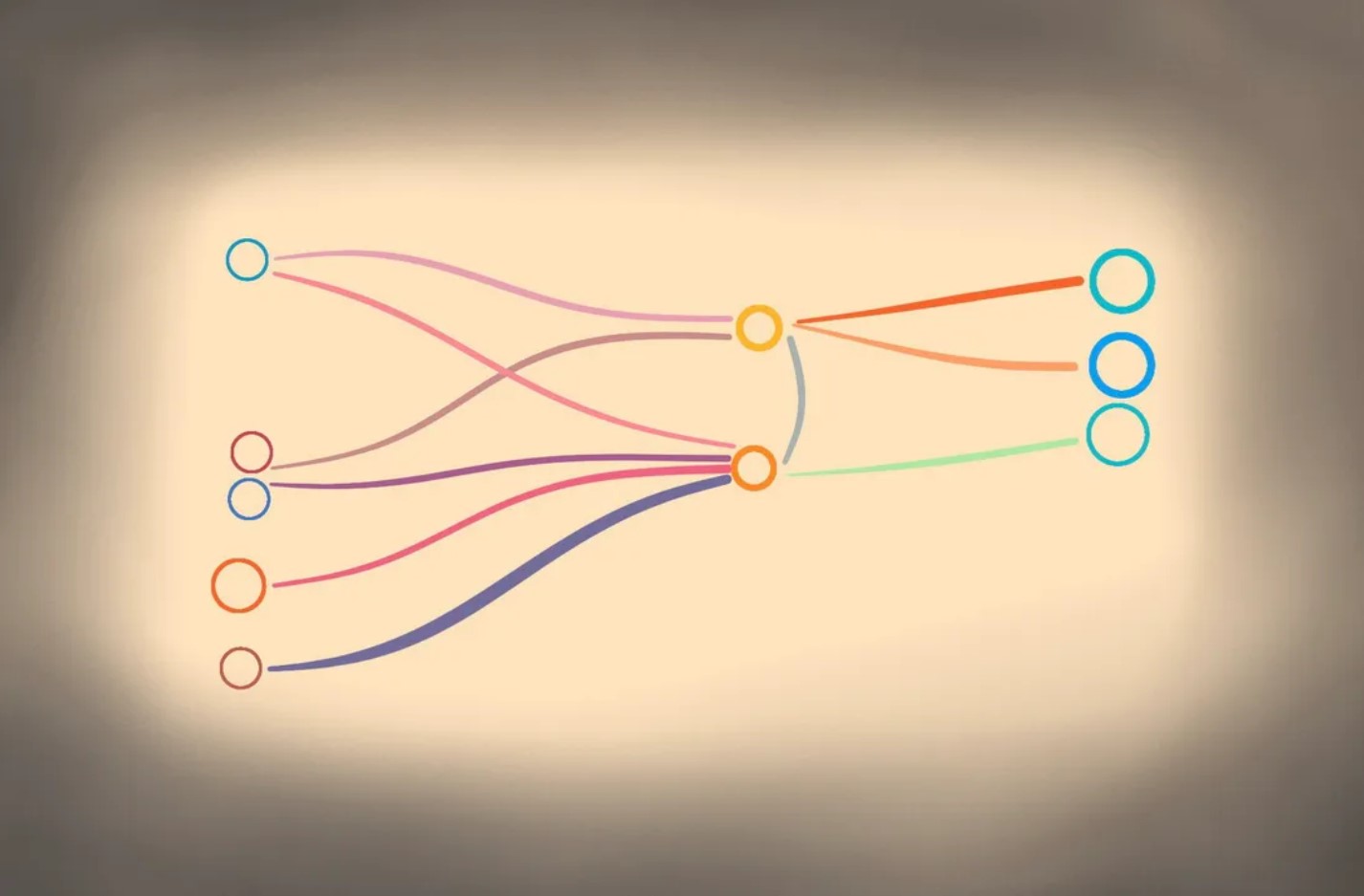

Almost all machine learning models function via a learning rule that changes the so-called weights, also known as parameters, of the program as the program is fed examples of data, and, possibly, labels attached to that data. It is the value of the weights that counts as “knowing” or “understanding.”
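To make the idea of a learning rule concrete, here is a minimal sketch in Python, assuming the simplest possible case: a single-unit perceptron trained on the logical AND function. The names `predict`, `train_perceptron`, and `AND_EXAMPLES` are illustrative and do not come from the article. The point is only that what changes during training is the weights, not the data.

```python
# A minimal perceptron sketch (illustrative assumption, not the article's code).
# The data never changes; only the weights and bias do.

def predict(weights, bias, inputs):
    """Return 1 if the weighted sum of inputs exceeds zero, else 0."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0

def train_perceptron(examples, learning_rate=1.0, epochs=20):
    """Apply the perceptron learning rule to (inputs, label) pairs."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, label in examples:
            error = label - predict(weights, bias, inputs)
            # Learning rule: nudge each weight in proportion to the error
            # and to the input that contributed to it.
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# Labeled examples: the inputs and the label attached to each.
AND_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_EXAMPLES)
predictions = [predict(weights, bias, inputs) for inputs, _ in AND_EXAMPLES]
print(weights, bias, predictions)
```

After training, everything the program "knows" about AND is held in two weights and a bias. Modern deep learning models use different learning rules and vastly more parameters, but the principle that learning means adjusting weights is the same.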

The pattern that is being found is really a pattern of how weights change. The weights simulate how real neurons are believed to “fire,” following a principle formulated by psychologist Donald O. Hebb that became known as Hebbian learning: the idea that “neurons that fire together, wire together.”
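The “fire together, wire together” idea can be sketched as a simple weight update. The following is a textbook simplification under stated assumptions, not the rule used to train modern deep networks: each connection grows in proportion to the product of the activity on its two ends. The function name `hebbian_update` and the toy activity vectors are hypothetical.

```python
# A plain Hebbian update sketch (a textbook simplification; names are illustrative).

def hebbian_update(weights, pre, post, learning_rate=0.1):
    """Strengthen weights[i][j] when post unit i and pre unit j are active together."""
    return [
        [w + learning_rate * post_i * pre_j for w, pre_j in zip(row, pre)]
        for row, post_i in zip(weights, post)
    ]

# Two output units, three input units, all connections starting at zero.
weights = [[0.0, 0.0, 0.0],
           [0.0, 0.0, 0.0]]
pre = [1, 0, 1]   # input units 0 and 2 fire
post = [1, 0]     # output unit 0 fires

# Present the same co-activation five times.
for _ in range(5):
    weights = hebbian_update(weights, pre, post)

print(weights)
```

Only the connections between units that were active at the same time are strengthened; the rest stay at zero. In this unnormalized form the weights grow without bound under repeated presentation, which is one reason practical variants add decay or normalization.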

It is the pattern of weight changes that is the model for learning and understanding in machine learning, something the founders of deep learning emphasized. As expressed almost forty years ago, in one of the foundational texts of deep learning, Parallel Distributed Processing, Volume I, James McClelland, David Rumelhart, and Geoffrey Hinton wrote,

> What is stored is the connection strengths between units that allow these patterns to be created […] If the knowledge is the strengths of the connections, learning must be a matter of finding the right connection strengths so that the right patterns of activation will be produced under the right circumstances.

McClelland, Rumelhart, and Hinton were writing for a select audience, cognitive psychologists and computer scientists, and they were writing in a very different age, an age when people didn’t make easy assumptions that anything a computer did represented “knowledge.” They were laboring at a time when AI programs couldn’t do much at all, and they were mainly concerned with how to produce a computation, any computation, from a fairly limited arrangement of transistors. […]

Read more: www.zdnet.com
