Researchers from the RIKEN Center for Advanced Intelligence Project (AIP) in Japan have shown that a deep-learning algorithm can be used to extract interpretable features from annotation-free histopathology images from prostate cancer patients.

Copyright by physicsworld.com

Their framework predicted biochemical recurrence more accurately than conventional methods based on the Gleason score.

Prostate cancer is the second most common cancer affecting men worldwide, with an incidence rate of 13.5%, according to the World Health Organization. Expert pathologists diagnose this type of cancer through a transrectal biopsy, following the results of a prostate-specific antigen (PSA) test. The extracted tissue samples are examined under a microscope and, if cancerous cells are found, assigned to risk groups using the Gleason score. This grading system is considered the gold standard in cancer medicine, as it determines the aggressiveness of prostate cancer and helps doctors establish the right course of treatment.

This type of diagnostic pathology, however, requires expert knowledge, is time-consuming and can suffer from inter-observer variability. Automated machine-learning tools capable of accurately classifying histopathology images do exist, but they have not yet gained clinical approval, mainly because deep-learning algorithms lack interpretability, which makes their decisions hard to visualize or explain.

AI-generated features deliver high accuracy

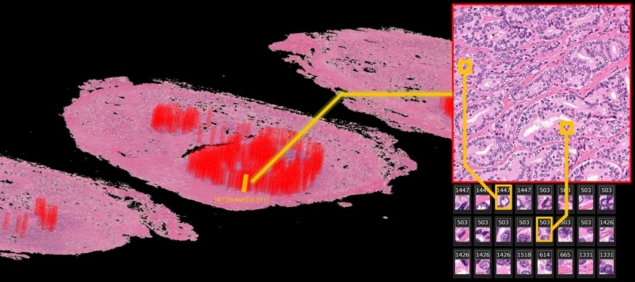

Paving the way towards interpretable clinical analyses, the group developed an artificial intelligence (AI) framework capable of acquiring interpretable features from annotation-free histopathological images. To achieve this, the researchers used whole-mount pathology images acquired at three different centres. The dataset included images from 842 patients at Nippon Medical School Hospital (NMSH), plus 95 patients from St. Marianna University Hospital (SMH) and Aichi Medical University Hospital (AMH).

The team used images from 100 patients at NMSH to extract the features, while the rest of the NMSH dataset was used to validate their method. Lead researcher Yoichiro Yamamoto and his team trained two unsupervised deep neural networks, known as deep autoencoders, to reduce the 10-billion-scale pixel data into 100 features. They achieved this by using both low-resolution and high-resolution histopathology image patches, inspired by the diagnostic process of pathologists. The computation was performed on AIP’s RAIDEN supercomputer.
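The idea of pairing a detailed view with a wider, coarser view of the same region can be illustrated with a small sketch. This is not the researchers' pipeline: the function names, the patch size and the block-averaging used for downsampling are all assumptions chosen for illustration, and the "image" is a toy grid of numbers rather than a pathology slide. In a real system, pairs like these would be the inputs to the autoencoders.

```python
from statistics import mean


def crop(image, top, left, size):
    """Return a size x size window of a 2D list-of-lists image."""
    return [row[left:left + size] for row in image[top:top + size]]


def downsample(patch, factor):
    """Shrink a square patch by averaging non-overlapping factor x factor blocks."""
    n = len(patch) // factor
    return [
        [
            mean(
                patch[r * factor + dr][c * factor + dc]
                for dr in range(factor)
                for dc in range(factor)
            )
            for c in range(n)
        ]
        for r in range(n)
    ]


def patch_pair(image, top, left, size=4, factor=2):
    """Build a (high-res, low-res) pair anchored at one location.

    The high-res patch is a raw size x size crop. The low-res patch covers a
    (size * factor) square from the same corner, averaged down to size x size,
    so it sees more surrounding context at coarser detail.
    """
    high = crop(image, top, left, size)
    wide = crop(image, top, left, size * factor)
    low = downsample(wide, factor)
    return high, low


# Toy 16 x 16 "image" whose pixel value is row * 16 + column (illustrative only).
image = [[r * 16 + c for c in range(16)] for r in range(16)]
high, low = patch_pair(image, top=0, left=0)
```

Both patches end up with the same shape, but the low-resolution one summarizes an area four times larger, loosely mirroring how a pathologist scans a slide at low magnification before zooming in.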

To validate their work, the researchers used the 100 generated features to predict cancer recurrence in the remaining NMSH dataset. At the same time, they used the human-established cancer criterion, the Gleason score, to make the same predictions. Their results showed that predictions of biochemical recurrence were more accurate with the AI-generated features than with the conventional method.


Moreover, combining the Gleason score with the machine-generated features increased the accuracy further. The framework also delivered similar accuracies on the SMH and AMH datasets, suggesting potential for broad use.
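One common way to compare how well two predictors separate patients who relapse from those who do not is the area under the ROC curve (AUC). The article only says the predictions were "more accurate", so the choice of AUC here is an assumption, and the numbers below are made-up toy values rather than study data. The sketch shows how such a comparison could be computed.

```python
def auc(scores, labels):
    """Area under the ROC curve via pairwise comparison.

    Equals the probability that a randomly chosen positive case receives a
    higher score than a randomly chosen negative case (ties count one half).
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Made-up toy values, not study data: 1 = recurrence, 0 = no recurrence.
recurred = [1, 1, 1, 0, 0, 0]
score_a = [0.9, 0.8, 0.4, 0.5, 0.2, 0.1]  # hypothetical fine-grained predictor
score_b = [7, 7, 6, 7, 6, 6]              # hypothetical coarse, grade-like predictor

auc_a = auc(score_a, recurred)
auc_b = auc(score_b, recurred)
```

An AUC of 1.0 means every relapsing patient is scored above every non-relapsing one, while 0.5 is no better than chance. A coarse score with many ties, like the second toy predictor, tends to separate cases less sharply.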

Finally, the researchers evaluated the AI-generated features. They retrieved the most representative images for each of the features and asked an expert pathologist to examine them. “In summary”, they say, “the pathologist found that the deep neural networks appeared to have mastered the basic concept of the Gleason score fully automatically, generating explainable key features that could be understood by pathologists.”

The researchers point out that the deep neural networks identified features of stroma in the non-cancerous area as prognostic factors, and that such features typically have not been evaluated in prostate histopathological images. This is a key result as it raises the possibility of a tool capable of discovering new and uncharted disease characteristics. As future work, the team plan to further validate the framework by conducting clinical trials and by applying it to other diseases including rare cancers.

Read more – physicsworld.com

Researchers from the RIKEN Center for Advanced Intelligence Project ( AIP ) in Japan have shown that a deep-learning algorithm can be used to extract interpretable features from annotation-free histopathology images from prostate cancer patients.

Copyright by physicsworld.com

Prostate cancer is the second most common cancer affecting men worldwide, with an incidence rate of 13.5%, according to the World Health Organization. Expert pathologists diagnose this type of cancer through a transrectal biopsy, following the results of a prostate specific antigen (PSA) test. The extracted samples of tissue are examined under a microscope and, if cancerous cells are found, divided into risk groups assigned through the Gleason Score. This grading system is considered the gold standard in cancer medicine, as it determines the aggressiveness of prostate cancer and helps doctors establish the right course of treatment.

This type of diagnostic pathology, however, requires expert knowledge, is time consuming and can suffer from inter-observer variability. Even though automated machine learning tools capable of accurately classifying histopathology images exist, these methods have not yet gained clinical approval – mainly because deep-learning algorithms suffer from a lack of interpretability, making their decisions hard to visualize or even explain.

AI-generated features deliver high accuracy

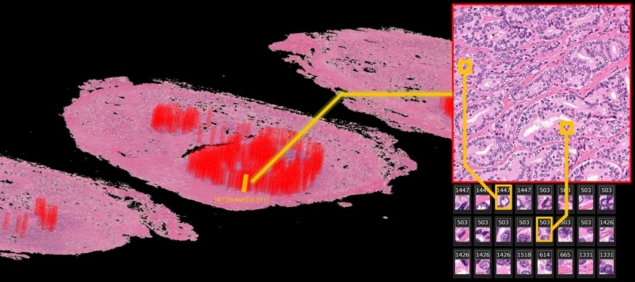

Paving the way towards interpretable clinical analyses, the group developed an artificial intelligence (AI) framework capable of acquiring interpretable features from annotation-free histopathological images. To achieve this, the researchers used whole-mount pathology images acquired at three different centres. This included images from 842 patients at Nippon Medical School Hospital (NMSH), plus 95 patients from St. Marianna University hospital (SMH) and Aichi Medical University Hospital (AMH).

The team used images from 100 patients at NMSH to extract the features, while the rest of the NMSH dataset was used to validate their method. Lead researcher Yoichiro Yamamoto and his team trained two unsupervised deep neural networks, known as deep autoencoders, to reduce the 10-billion-scale pixel data into 100 features. They achieved this by using both low-resolution and high-resolution histopathology image patches, inspired by the diagnostic process of pathologists. The computation was performed on AIP’s RAIDEN supercomputer.

To validate their work, the researchers used the 100 generated features to predict cancer recurrence in the remaining NMSH dataset. At the same time, they used the human-established cancer criteria, the Gleason score, to make the same predictions. Their results showed that predictions of biochemical recurrence were more accurate with AI-generated features, than when using the conventional method.

Thank you for reading this post, don't forget to subscribe to our AI NAVIGATOR!

Moreover, when combining the Gleason score with the machine-generated features, the accuracy further increased. Furthermore, this framework delivered similar accuracies when used with the SMH and AMH datasets, revealing the potential for broad use.

Finally, the researchers evaluated the AI-generated features. They retrieved the most representative images for each of the features and asked an expert pathologist to examine them. “In summary”, they say, “the pathologist found that the deep neural networks appeared to have mastered the basic concept of the Gleason score fully automatically, generating explainable key features that could be understood by pathologists.”

The researchers point out that the deep neural networks identified features of stroma in the non-cancerous area as prognostic factors, and that such features typically have not been evaluated in prostate histopathological images. This is a key result as it raises the possibility of a tool capable of discovering new and uncharted disease characteristics. As future work, the team plan to further validate the framework by conducting clinical trials and by applying it to other diseases including rare cancers.

Read more – physicsworld.com

Share this: