A new approach to object identification, developed by researchers at Duke University and the Institut de Physique de Nice (INPHYNI), jointly learns an optimal measurement strategy and a matching processing algorithm. It uses inferred knowledge about the task, scene, and measurement constraints to improve object recognition accuracy while using a limited number of measurements.

Copyright by www.photonics.com

The approach uses microwave patterns to increase accuracy and reduce computing time and power requirements.

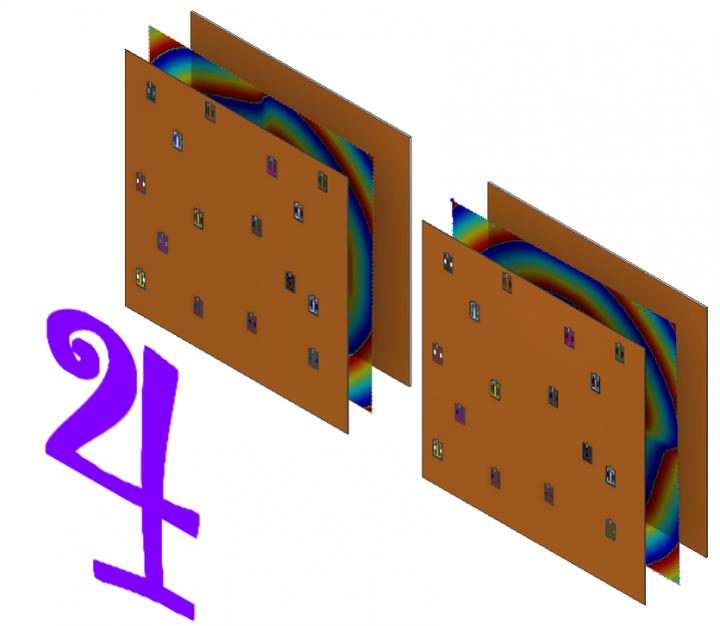

The researchers used a metamaterial antenna that can sculpt a microwave wavefront into various shapes. The metamaterial is composed of an 8 × 8 grid of squares. Each square contains electronic structures that allow the square to be dynamically tuned to either block or transmit microwaves.
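As a rough illustration of that description, one antenna configuration can be modeled as an 8 × 8 grid of on/off values. The sketch below is only a toy model under that assumption; the helper names `random_mask` and `show` are hypothetical and are not taken from the researchers' work.

```python
import random

GRID = 8  # the article describes an 8 x 8 grid of tunable squares

def random_mask(rng):
    """Return an 8 x 8 mask: 1 = square transmits microwaves, 0 = square blocks them."""
    return [[rng.randint(0, 1) for _ in range(GRID)] for _ in range(GRID)]

def show(mask):
    """Render a mask as text, '#' for transmitting and '.' for blocking squares."""
    return "\n".join("".join("#" if cell else "." for cell in row) for row in mask)

rng = random.Random(0)  # fixed seed so the sketch is repeatable
mask = random_mask(rng)
print(show(mask))

# With two states per square, the number of distinct patterns is 2 ** 64.
n_patterns = 2 ** (GRID * GRID)
print(n_patterns)  # 18446744073709551616
```

With roughly 1.8 × 10^19 possible patterns per antenna, trying every configuration is out of the question, which is one way to see why the settings are learned rather than enumerated.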

For each measurement the system takes, an intelligent sensor selects which squares will allow microwaves to pass through. This creates a unique microwave pattern, which bounces off the object to be identified and returns to a different, but similar, metamaterial antenna.

The sensing antenna also uses a pattern of active squares, which adds more options for shaping the reflected waves. The computer analyzes the incoming signal and attempts to identify the object.
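A single measurement of this kind can be sketched as a simple bilinear model, in which the received signal is the sum of the couplings between every open transmit square and every open receive square. This is an assumed toy model, not the physics used in the study: the `scene` matrix of complex couplings, and the functions `random_scene` and `measure`, are hypothetical stand-ins for how an object scatters the waves.

```python
import random

N = 64  # 8 x 8 squares, flattened into one list per antenna

def random_mask(rng):
    """Random on/off setting for the N squares of one antenna."""
    return [rng.randint(0, 1) for _ in range(N)]

def random_scene(rng):
    """Hypothetical stand-in for an object: one complex coupling per (rx, tx) pair."""
    return [[complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(N)]
            for _ in range(N)]

def measure(tx_mask, rx_mask, scene):
    """One measurement: sum the couplings between every open tx and open rx square."""
    total = 0j
    for j, rx_open in enumerate(rx_mask):
        if not rx_open:
            continue  # a blocking receive square contributes nothing
        for i, tx_open in enumerate(tx_mask):
            if tx_open:
                total += scene[j][i]
    return total

rng = random.Random(1)
scene = random_scene(rng)
tx, rx = random_mask(rng), random_mask(rng)
signal = measure(tx, rx, scene)
print(abs(signal))  # magnitude of the single complex number the receiver records
```

Each pair of transmit and receive patterns reduces the whole scene to one complex number, so the choice of patterns decides which aspects of the object that number reflects.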

By repeating this process thousands of times for a number of variations, the machine learning algorithm eventually discovers which pieces of information are the most important and which settings on both the sending and receiving antennas are the best at gathering them.
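The idea of tuning the antenna settings and the classifier together can be sketched with a much simpler stand-in than the researchers' method, which the article does not detail. The example below swaps in a greedy bit-flip search over the masks combined with a nearest-centroid classifier, run on synthetic data. Everything in it is an assumption made for illustration: the 4 × 4 toy grid (chosen to keep pure Python fast), the three measurements, the three object classes, the noise model, and all function names.

```python
import random

N = 16          # squares per antenna; a 4 x 4 toy grid (the article's is 8 x 8)
M = 3           # measurements per object, i.e. (tx mask, rx mask) pairs
CLASSES = 3     # hypothetical number of object types
PER_CLASS = 8   # hypothetical training samples per object type

def make_data(rng):
    """Synthetic scenes: each class is a random N x N coupling matrix plus noise."""
    protos = [[[rng.gauss(0, 1) for _ in range(N)] for _ in range(N)]
              for _ in range(CLASSES)]
    data = []
    for label, proto in enumerate(protos):
        for _ in range(PER_CLASS):
            scene = [[v + rng.gauss(0, 1.0) for v in row] for row in proto]
            data.append((scene, label))
    return data

def measure(tx, rx, scene):
    """Bilinear toy measurement: sum of couplings between open tx and rx squares."""
    return sum(scene[j][i] for j in range(N) if rx[j] for i in range(N) if tx[i])

def features(masks, scene):
    """Feature vector: one number per (tx, rx) mask pair."""
    return [measure(tx, rx, scene) for tx, rx in masks]

def accuracy(masks, data):
    """Fit a nearest-centroid classifier on the measurements and score it."""
    feats = [(features(masks, scene), label) for scene, label in data]
    centroids = []
    for c in range(CLASSES):
        rows = [f for f, label in feats if label == c]
        centroids.append([sum(col) / len(rows) for col in zip(*rows)])
    correct = 0
    for f, label in feats:
        dists = [sum((a - b) ** 2 for a, b in zip(f, cen)) for cen in centroids]
        correct += dists.index(min(dists)) == label
    return correct / len(feats)

def learn_masks(data, rng, sweeps=2):
    """Greedy search: flip one square at a time, keep flips that do not hurt."""
    masks = [([rng.randint(0, 1) for _ in range(N)],
              [rng.randint(0, 1) for _ in range(N)]) for _ in range(M)]
    start = best = accuracy(masks, data)
    for _ in range(sweeps):
        for tx, rx in masks:
            for mask in (tx, rx):
                for k in range(N):
                    mask[k] ^= 1              # try toggling this square
                    score = accuracy(masks, data)
                    if score >= best:
                        best = score          # keep the flip
                    else:
                        mask[k] ^= 1          # undo the flip
    return masks, start, best

rng = random.Random(0)
data = make_data(rng)
masks, start, best = learn_masks(data, rng)
print(f"training accuracy: {start:.2f} -> {best:.2f}")
```

Because the classifier is refit every time a square is toggled, the transmit masks, receive masks, and processing step are all scored as one pipeline. The printed figure is training accuracy on synthetic data only; a real evaluation would need held-out measurements.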

“The transmitter and receiver act together,” researcher Mohammadreza Imani said. “They are jointly designed and optimized to capture the features relevant to the task at hand.”

“If you know your task, and you know what sort of scene to expect, you may not need to capture all the information possible,” researcher Philipp del Hougne said. “This codesign of measurement and processing allows us to make use of all the a priori knowledge that we have about the task, scene, and measurement constraints to optimize the entire sensing process.”

The new machine learning approach removes the need for humans to create images for the system to analyze. Instead, it analyzes the raw measurement data directly and determines the hardware settings that best capture the most important information. After training, the algorithm settles on a small group of settings that help it separate useful data from irrelevant data, reducing the number of measurements, the time, and the computational power needed. Instead of the hundreds or even thousands of measurements typically required by traditional microwave imaging systems, the new method can identify an object using fewer than 10 measurements.

“Object identification schemes typically take measurements and go to all this trouble to make an image for people to look at and appreciate,” professor Roarke Horstmeyer said. “But that’s inefficient because the computer doesn’t need to ‘look’ at an image at all.”

“This approach circumvents that step and allows the program to capture details that an image-forming process might miss, while ignoring other details of the scene that it doesn’t need,” researcher Aaron Diebold said. “We’re basically trying to see the object directly from the eyes of the machine.”

Whether this level of improvement will scale up to more complicated sensing applications is an open question, but the researchers are already trying to use their new concept to optimize hand-motion and gesture recognition for next-generation computer interfaces. The small size, low cost, and easy manufacturability of microwave metamaterials make them promising candidates for future devices. […]

Read more – www.photonics.com
